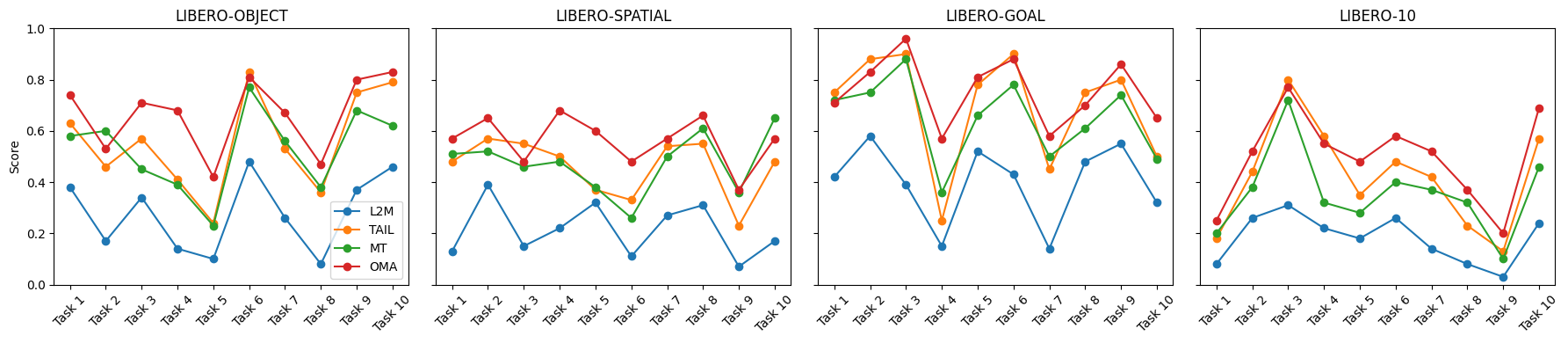

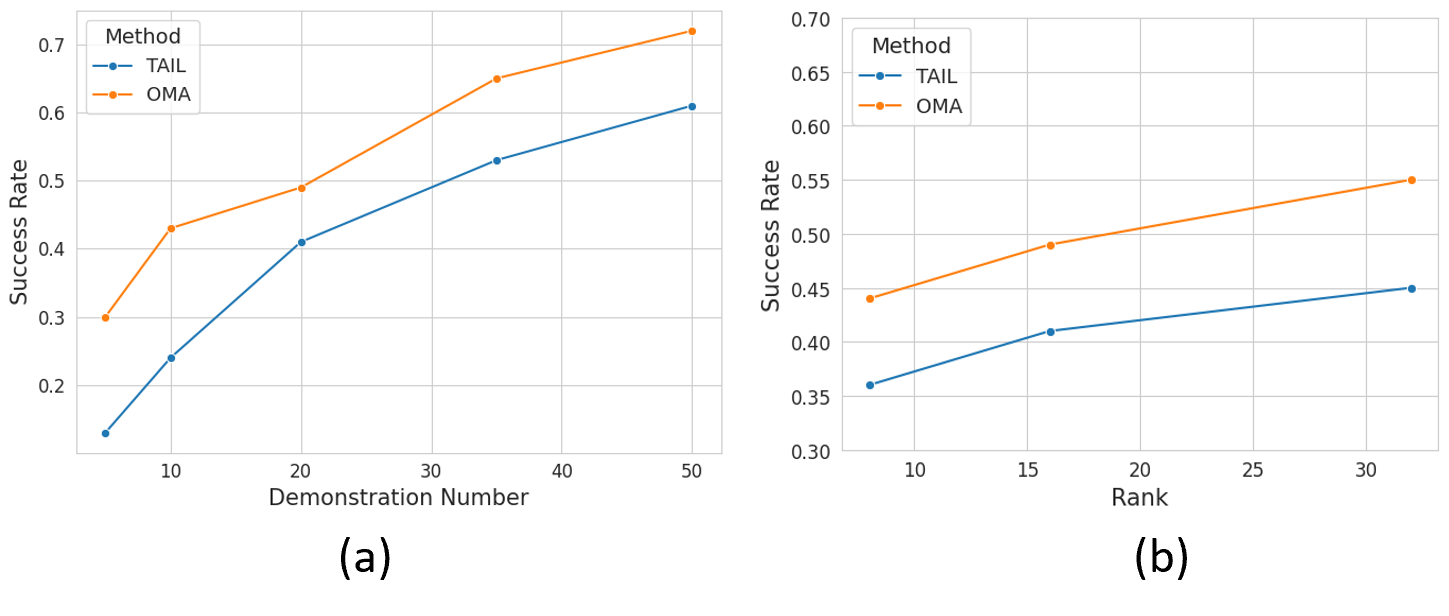

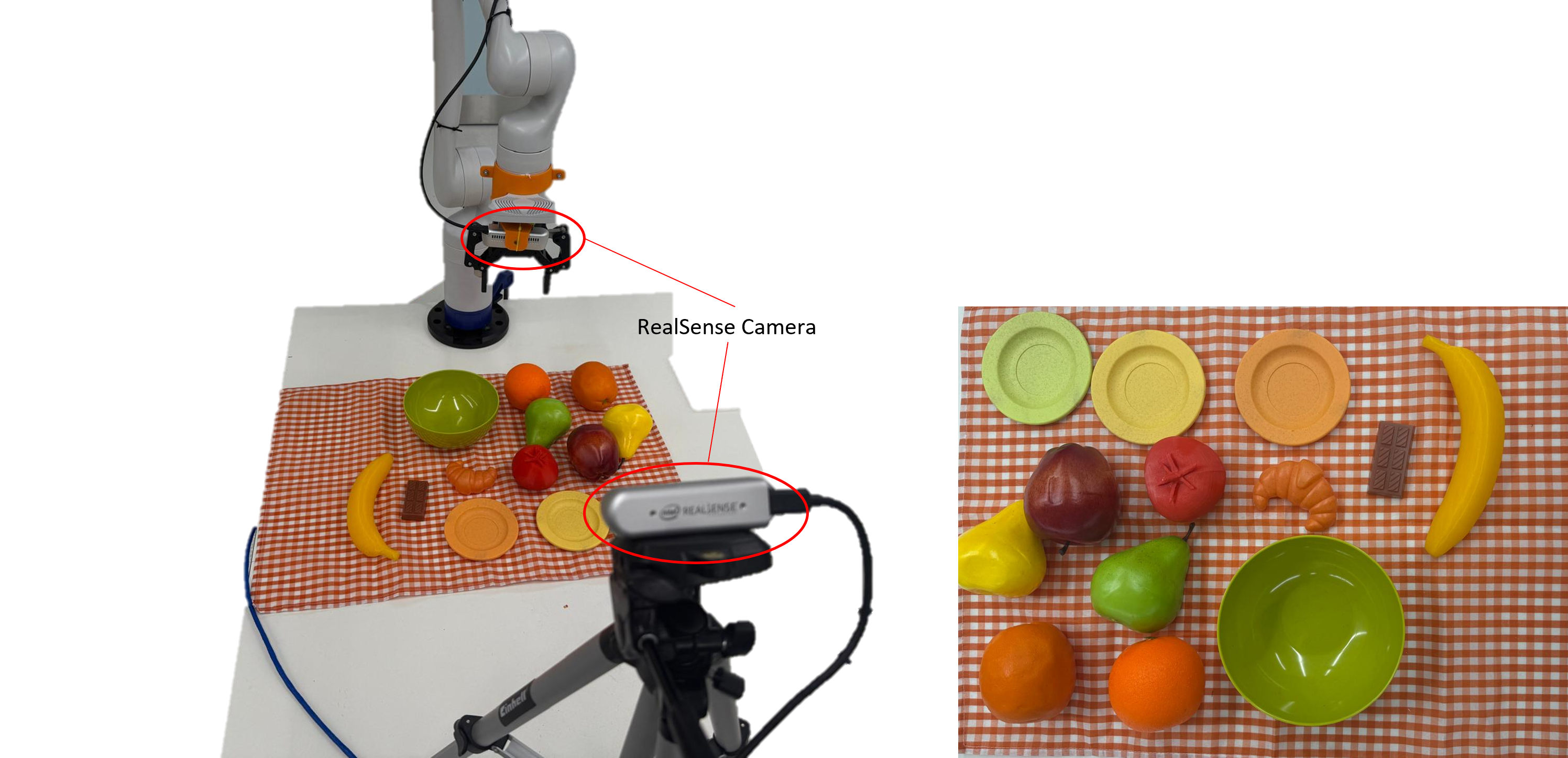

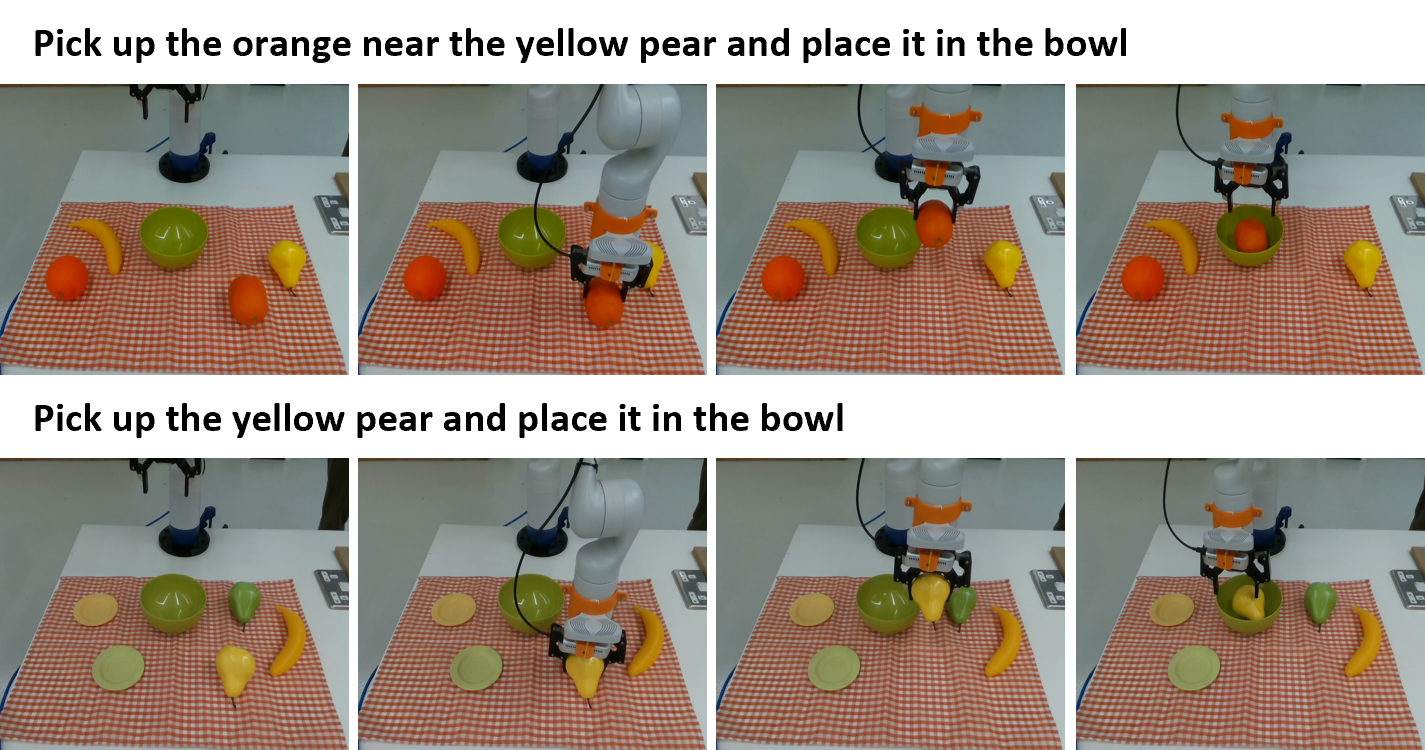

Continual adaptation is essential for general autonomous agents. For example, a household robot pretrained with a repertoire of skills must still learn and adapt to unseen tasks specific to each household. However, prior work mainly emphasizes either effective pretraining of decision-making models or single-task adaptation. Building on parameter-efficient fine-tuning in language models, recent work has explored lightweight adapters for adapting pretrained policies; these adapters can preserve features learned during pretraining and achieve good adaptation performance. However, these approaches learn each task separately and overlook the underlying relationships between new and prior tasks, which limits knowledge transfer. In this paper, we propose Online Meta-Adapters (OMA) for continual imitation learning. Rather than applying adapters directly, OMA employs a meta-learning objective to capture transferable priors from prior tasks, thereby accelerating adaptation to new tasks. Extensive experiments in both simulated and real-world environments demonstrate that OMA achieves better adaptation performance than the baseline methods.